Top Vibe-Coding Security Risks

August 29, 2025

Software development is changing rapidly: developers increasingly lean on AI assistants to generate working code from simple conversations, boosting speed and productivity. That convenience comes at a cost: many AI-written snippets contain subtle security flaws that can slip into production when developers skip careful review. Below we review these risks and explain why AI sometimes produces buggy code.

What Is Vibe Coding?

This approach lets programmers describe what they want in plain English and get working code instantly. AI researcher Andrej Karpathy coined the catchy term “vibe coding” for it. As he describes it, developers “fully give in to the vibes, embrace exponentials, and forget that the code even exists.”

There's a new kind of coding I call "vibe coding", where you fully give in to the vibes, embrace exponentials, and forget that the code even exists. It's possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting too good. Also I just talk to Composer with SuperWhisper…

— Andrej Karpathy (@karpathy) February 2, 2025

The numbers speak for themselves. Major tech companies like Google and Microsoft report that AI already generates over 20% of their code. According to Gartner, 75% of enterprise software engineers will use AI code assistants by 2028, up from less than 10% in early 2023. In Gartner’s 2023 survey of 598 global respondents, 63% of organizations were piloting, deploying, or had already deployed AI code assistants.

Companies are embracing this shift enthusiastically. Vibe coding prioritizes speed and employee satisfaction over rigid processes. Developers can ship features faster, prototype ideas quickly, and pivot easily when requirements change. This approach reduces initial costs by skipping heavy upfront planning and documentation while encouraging innovation and experimentation. The flexibility helps companies stay competitive by getting to market first, even though it creates technical debt later. Many businesses accept this trade-off because short-term advantages often outweigh long-term maintenance costs.

But there’s a critical problem hiding beneath this convenience.

Recent security research reveals a troubling reality: one in three AI-generated code snippets contains vulnerabilities. Academic studies show even higher rates, with over 60% of AI-written programs having security flaws. When developers embrace vibe coding and skip reviewing the generated code, they unknowingly introduce these risks into production systems.

The same technology that speeds up development may be quietly making software less secure. Understanding this risk isn’t just technical curiosity. It’s essential for anyone building or using modern software.

The question isn’t whether AI will reshape programming. It’s whether we can harness its power while protecting against its hidden threats.

Why AI Generates Insecure Code

Understanding why AI creates vulnerable software requires looking beyond the technology itself. The problem stems from fundamental limitations in how these models learn and operate.

Learning From Flawed Examples

AI code generators train on millions of code samples from public repositories like GitHub. This sounds impressive until you realize what’s actually in that data. These repositories contain decades of human-written code with known security flaws, outdated practices, and deprecated libraries. There’s no quality control or security screening process.

When an AI model encounters the same insecure pattern repeatedly in its training data, it learns to reproduce it. A Stanford University study found that developers using AI assistants introduced more security vulnerabilities than those coding manually.

Missing the Security Context

AI models excel at pattern matching but have difficulty with security reasoning. They can generate syntactically correct code that compiles and runs, but they can’t evaluate its security. Security requires understanding threats, attack vectors, and defensive programming techniques that go far beyond syntax.

For example, an AI might suggest using a deprecated encryption method because it appears frequently in training data, completely unaware that cryptographers abandoned it years ago due to known weaknesses. The model sees popular patterns, not security implications.

The Numbers Don’t Lie

Research consistently shows concerning vulnerability rates in AI-generated code:

- Approximately 40% of GitHub Copilot outputs contained vulnerabilities from the industry’s top 25 most dangerous software weaknesses

- Studies found 68-73% of code samples from popular AI tools contained security flaws when manually reviewed

- Analysis of real-world AI-generated code showed 32.8% of Python and 24.5% of JavaScript snippets contained known vulnerabilities

Speed Over Security

AI models optimize for functionality and speed, not security. They generate code that works quickly rather than code that works safely. This aligns perfectly with business pressures for rapid development, creating a dangerous feedback loop.

The Trust Paradox

Here’s the most troubling finding: while 59% of developers express security concerns about AI-generated code, 76% believe AI tools produce more secure code than humans. This misplaced confidence means developers are less likely to scrutinize AI output, making security reviews even more critical.

Why This Matters

These aren’t abstract technical problems. When AI generates insecure code and developers trust it without review, real applications inherit these vulnerabilities. Understanding these fundamental limitations is the first step toward using AI safely in software development.

The solution isn’t abandoning AI tools but recognizing their blind spots and building appropriate safeguards around them.

The Tea App Disaster: A Perfect Example

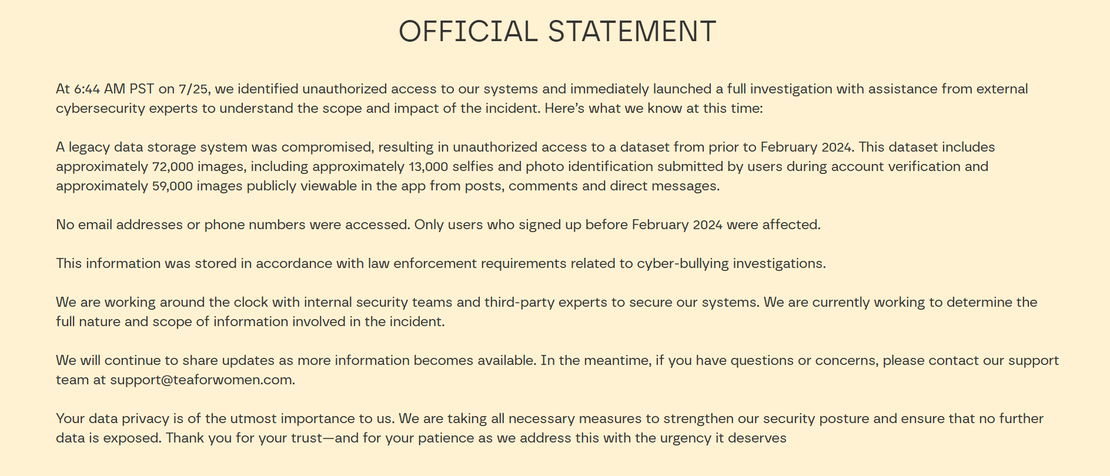

On July 25, 2025, Tea App made a devastating announcement: they had been “hacked.” According to their official statement, unauthorized access occurred at 6:44 AM PST, compromising a legacy data storage system. The breach exposed approximately 72,000 images, including 13,000 government ID photos from user verification and 59,000 publicly viewable images from posts and messages.

The company initially claimed only users who signed up before February 2024 were affected, with no email addresses or phone numbers accessed. However, independent investigations revealed the data included direct messages from the previous week, contradicting the company’s timeline.

This Wasn’t Even a Hack

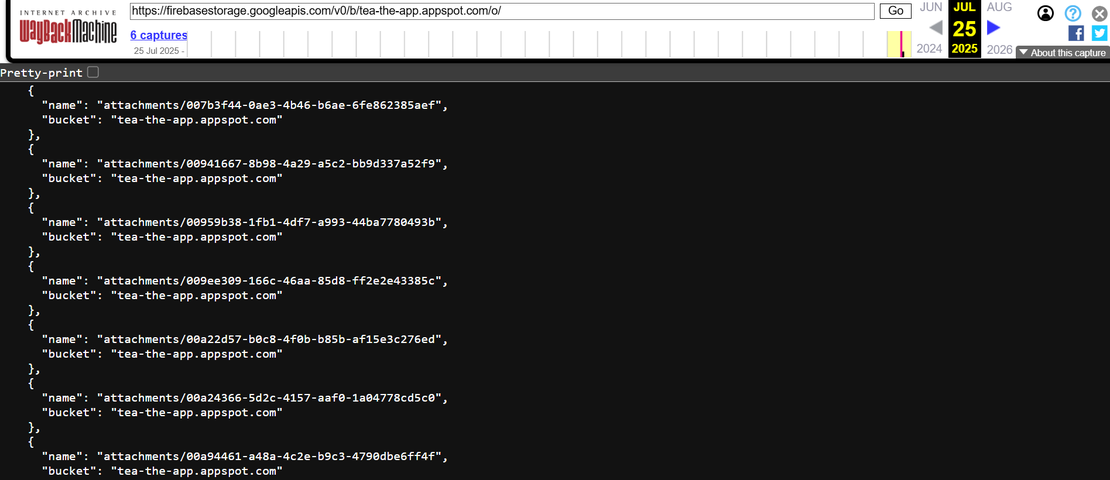

Here’s the shocking truth: nobody actually “hacked” Tea App. Security experts discovered that the Firebase storage system was left completely open with default settings. As investigators noted, “They literally did not apply any authorization policies onto their Firebase instance.”

Anyone could access the entire database using a simple Python script. The exposed Firebase endpoint was archived at:

https://firebasestorage.googleapis.com/v0/b/tea-the-app.appspot.com/o/

The app stored everyone’s photos and government IDs unencrypted in a public storage bucket. There were no access controls, no rate limiting on admin panels, and no basic security protections.

The Vibe Coding Connection

Security experts quickly identified this as a textbook example of vibe coding gone wrong. The Flutter app was built by a developer with just six months of programming experience, using what appeared to be fast, AI-assisted development with minimal technical oversight.

The telltale signs were everywhere:

- Default configurations: Firebase storage used insecure default settings

- No security layers: Missing encryption, access controls, and authentication

- Speed over safety: Quick deployment without understanding security implications

- Inexperienced oversight: Built without proper security expertise

The Cascading Disaster

The breach happened in two waves:

First breach:

- 72,000 user images exposed

- 13,000 government ID verification photos

- 404 Media reported that 4chan users were sharing personal data and selfies after discovering the exposed database

Second breach:

- Over 1.1 million private messages exposed

- Happened shortly after the first incident

- Tea disabled messaging features in response

Many photos contained EXIF location data, meaning user locations were also compromised and spread across anonymous forums.

Why This Matters for Vibe Coding

Tea App’s disaster perfectly illustrates the hidden dangers of vibe coding. When developers rely heavily on AI-generated code without understanding security principles, they inherit both functionality and vulnerabilities. The pressure to ship quickly, combined with limited security knowledge, created a perfect storm.

This wasn’t a sophisticated cyberattack. It was the predictable result of treating security as an afterthought in a world where AI makes it easier than ever to build functional but fundamentally insecure applications.

The Tea App breach serves as a stark warning: in the age of AI-assisted development, understanding what your code actually does has never been more critical.

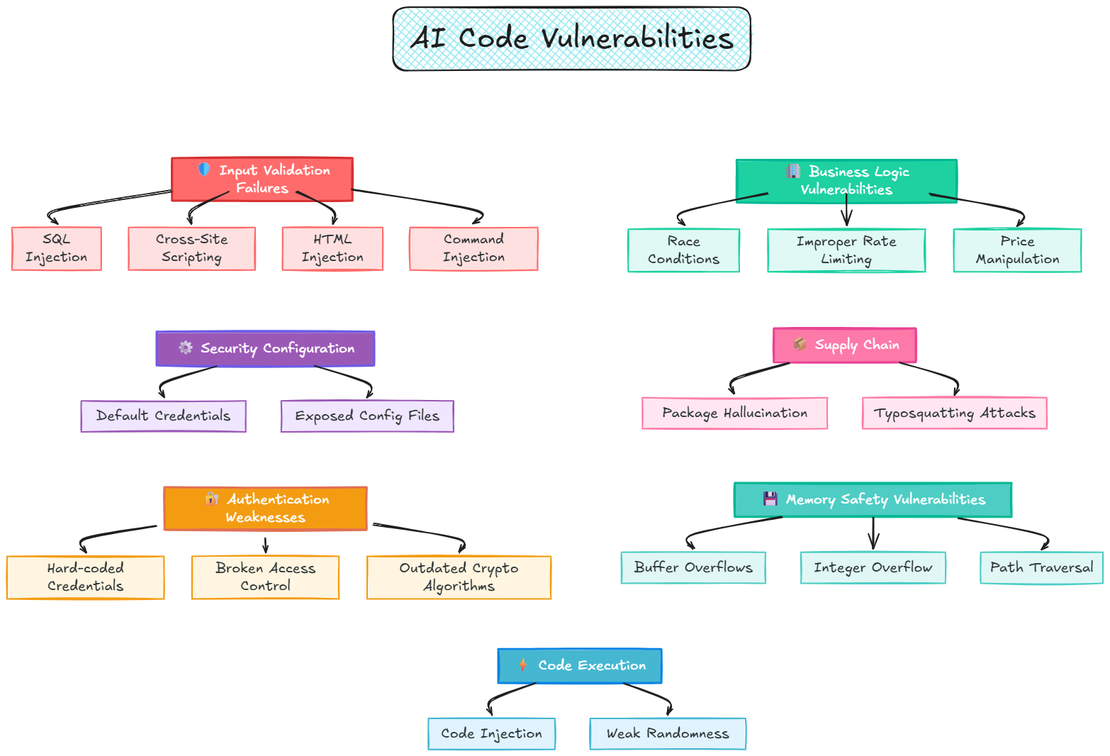

Common Vulnerabilities in AI-Generated Code

AI-assisted development amplifies familiar security threats when developers code rapidly without understanding the security implications. These gaps represent real dangers that attackers exploit daily. The vulnerabilities outlined below are based on my hands-on experience using LLMs to vibe-code apps and other experimental projects.

Input Validation Failures

SQL Injection happens when user input is concatenated into database queries instead of being passed as parameters. AI models frequently generate unparameterized queries, allowing attackers to manipulate databases and steal data.

Cross-Site Scripting (XSS) occurs when applications display unsanitized user input. Malicious scripts execute in victims’ browsers, stealing sessions or performing unauthorized actions.

Command Injection through unsafe system calls lets attackers execute system commands through vulnerable input fields, potentially destroying server data or gaining system access.

HTML Injection enables content manipulation by inserting malicious HTML, leading to phishing attacks.

Memory Safety Vulnerabilities

Buffer Overflows and Memory Corruption, especially in C/C++, arise from unsafe functions like strcpy, sprintf, and scanf with an unbounded %s, which AI models frequently generate without proper bounds checking.

Integer Overflow leads to unexpected behavior and memory corruption when AI generates arithmetic operations, such as buffer size calculations, without validation.
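
To make the integer overflow risk concrete, here is a minimal sketch of a checked size calculation in C. The helper names checked_mul_size and alloc_array are hypothetical, chosen only for illustration. The idea is to test whether the multiplication would wrap before performing it, instead of trusting the product.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Multiply two sizes, reporting overflow instead of silently wrapping.
 * Returns 0 and stores the product on success, -1 if it would exceed SIZE_MAX. */
int checked_mul_size(size_t a, size_t b, size_t *result) {
    if (a != 0 && b > SIZE_MAX / a) return -1;
    *result = a * b;
    return 0;
}

/* Allocate an array of `count` elements of `elem_size` bytes each.
 * Returns NULL if the total size overflows or the allocation fails. */
void *alloc_array(size_t count, size_t elem_size) {
    size_t total;
    if (checked_mul_size(count, elem_size, &total) != 0) return NULL;
    return malloc(total);
}
```

Without the check, a huge attacker-controlled count can wrap the product to a small number, so the allocation succeeds but later writes run past the end of the buffer.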

Path Traversal vulnerabilities allow unauthorized file access through improperly validated file paths.

Code Execution Risks

Code Injection arises through dynamic execution and eval statements that AI models suggest for flexibility without considering security implications.

Weak Randomness appears in security-critical functions that use predictable generators instead of cryptographically secure alternatives.
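
As an illustration of the weak randomness problem, the sketch below generates a token from the operating system's random source instead of rand(). It assumes a Unix-like system that exposes /dev/urandom, and the function names secure_random_bytes and make_token are hypothetical.

```c
#include <stddef.h>
#include <stdio.h>

/* Fill `buf` with `len` bytes from the operating system's CSPRNG.
 * Assumption: a Unix-like system that provides /dev/urandom.
 * Returns 0 on success, -1 on failure. */
int secure_random_bytes(unsigned char *buf, size_t len) {
    FILE *f = fopen("/dev/urandom", "rb");
    if (f == NULL) return -1;
    size_t got = fread(buf, 1, len, f);
    fclose(f);
    return got == len ? 0 : -1;
}

/* Write `nbytes` random bytes as lowercase hex into `out`.
 * `out` must hold at least 2 * nbytes + 1 characters. */
int make_token(char *out, size_t out_size, size_t nbytes) {
    unsigned char raw[32];
    if (nbytes > sizeof raw || out_size < 2 * nbytes + 1) return -1;
    if (secure_random_bytes(raw, nbytes) != 0) return -1;
    for (size_t i = 0; i < nbytes; i++) {
        snprintf(out + 2 * i, 3, "%02x", raw[i]);
    }
    out[2 * nbytes] = '\0';
    return 0;
}
```

A generator like rand() is seeded from a small, guessable state, so session IDs or reset tokens built on it can be predicted. Reading from the OS source avoids that class of mistake.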

Authentication and Authorization Weaknesses

Hard-coded Credentials and secrets are embedded directly in source code, a pattern AI models frequently reproduce from training data.

Weak Password Policies allow easily guessable passwords, making brute force attacks successful.

Broken Access Controls let users access unauthorized resources by manipulating URLs or parameters.

Outdated Cryptographic Algorithms like MD5 provide a false sense of security: practical collision attacks exist, and modern hardware can brute-force unsalted MD5 password hashes quickly.

Security Configuration Mistakes

Default Credentials remain unchanged in production, giving attackers easy access through known passwords like admin/admin.

Missing Security Headers leave browsers without instructions such as which scripts may run or whether the page may be framed, enabling attacks like cross-site scripting and clickjacking.

Exposed Configuration Files (.env, .DS_Store, .git) leak database passwords, API keys, and system architecture details.

Data Exposure Risks

Exposed APIs without authentication leak sensitive user information, creating privacy breaches.

Sensitive Data in URLs and Logs makes confidential information permanently visible in browser history and server logs.

Information Leakage through detailed error messages reveals system architecture and helps attackers plan targeted attacks.

Business Logic Vulnerabilities

Race Conditions allow multiple users to exploit the same resource simultaneously, like purchasing limited inventory multiple times.

Improper Rate Limiting enables automated attacks with thousands of rapid login attempts or API calls.

Price Manipulation exploits flawed checkout processes where users modify prices through browser tools.

Modern Supply Chain Threats

Package Hallucination creates a dangerous new attack vector. Research shows 5% of commercial AI-generated code contains non-existent package names. Attackers register malicious packages under these names, so a developer who runs the suggested install command pulls in the attacker’s code.

Typosquatting Attacks exploit common typing mistakes. Developers installing “bitensor” instead of “bittensor” unknowingly execute malicious code designed to steal cryptocurrency wallets.

Recent attacks prove this threat’s severity. In August 2025, criminals published five typosquatted packages targeting Bittensor users within 25 minutes, including variations like “bittenso” and “qbittensor”.

These vulnerabilities create a complex attack surface requiring comprehensive security measures. Understanding their mechanisms helps developers recognize threats and implement appropriate defenses against AI-amplified security risks.

Case Study: Insecure AI-Generated Code

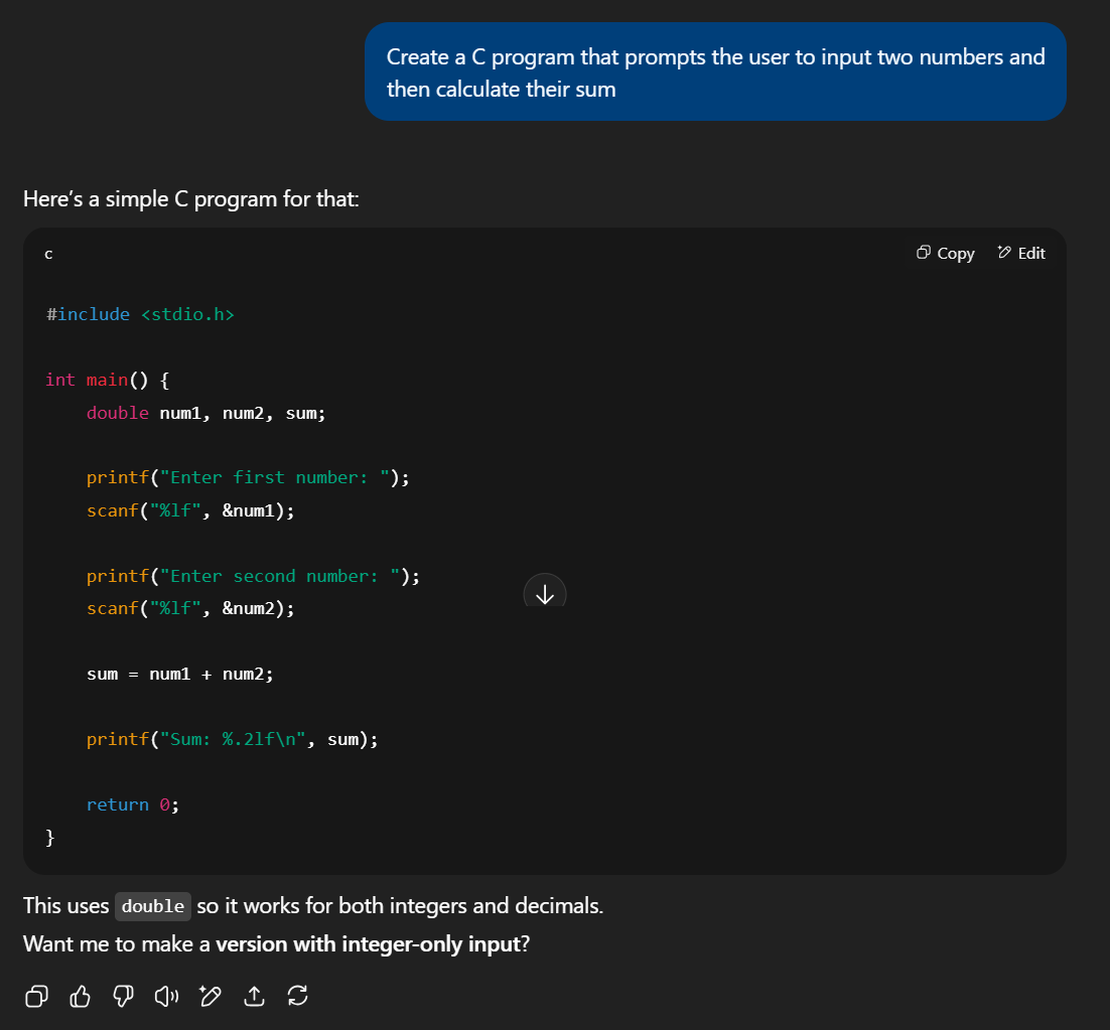

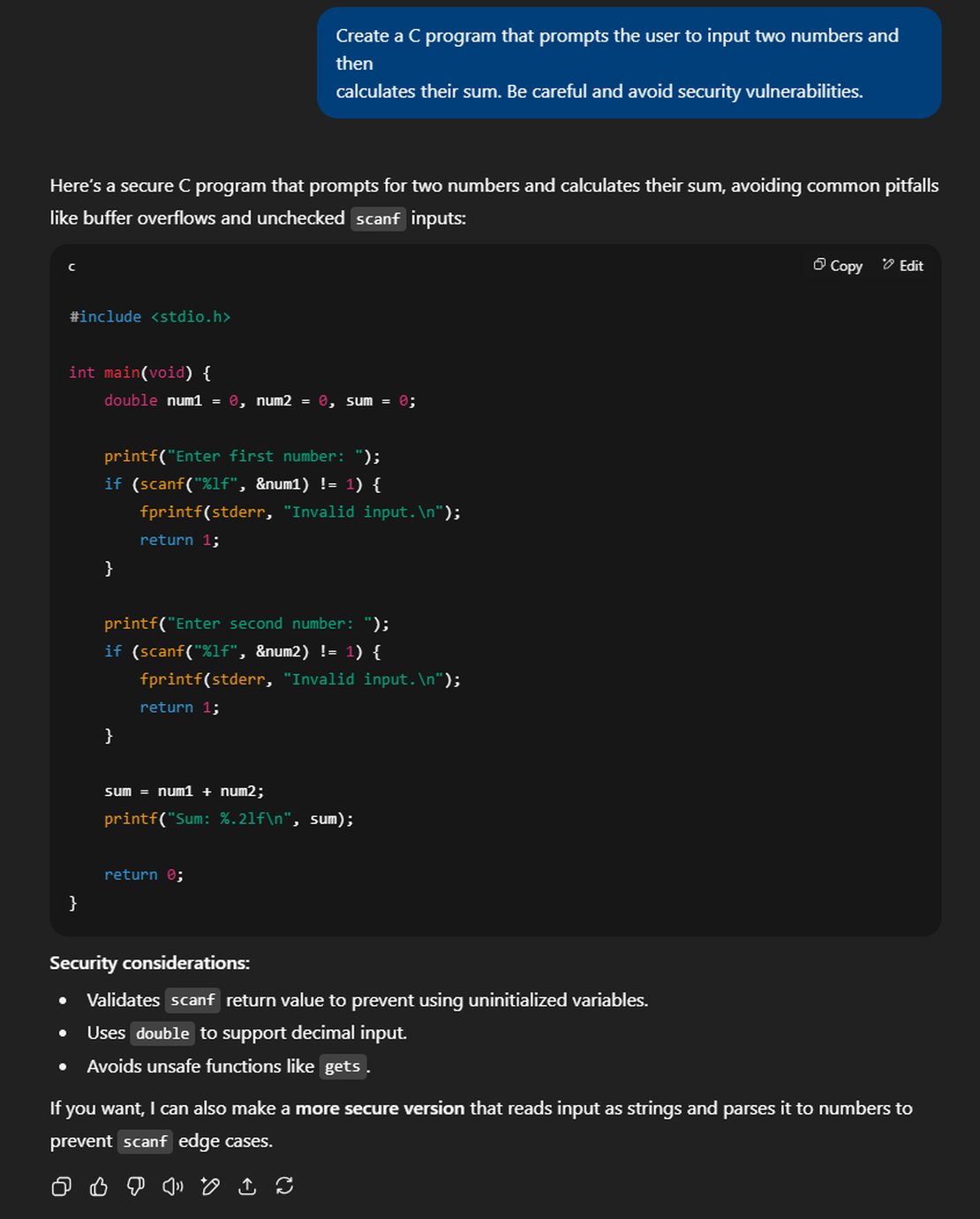

Let’s start with a simple example. Ask any AI model to “create a C program that adds two numbers,” and you’ll get something like this:

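```c
#include <stdio.h>

int main() {
    double num1, num2, sum;

    printf("Enter first number: ");
    scanf("%lf", &num1);

    printf("Enter second number: ");
    scanf("%lf", &num2);

    sum = num1 + num2;
    printf("Sum: %.2lf\n", sum);

    return 0;
}
```

This code compiles and runs, but it is not robust against hostile input. It never checks the return value of scanf, so after a failed conversion num1 or num2 is read while still uninitialized, and the C standard leaves scanf’s behavior undefined when a numeric input cannot be represented in the target type. Careless input handling of this kind belongs to the same family as the buffer overflows behind major incidents like the Morris Worm and Code Red.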

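The Morris Worm was the first major internet worm, created by Cornell graduate student Robert Morris in 1988. One of its infection methods was a buffer overflow in the fingerd service, which read network input with the unbounded gets function. A coding bug caused it to spread uncontrollably, infecting an estimated 10% of internet-connected computers and causing widespread system crashes.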

This landmark cybersecurity incident led to the creation of CERT and made Morris the first person prosecuted under the Computer Fraud and Abuse Act. The worm marked the beginning of modern internet security awareness.

When AI “Tries” to Be Secure

When explicitly asked to create secure code, AI models often produce something like this:

```c
#include <stdio.h>

int main(void) {
    double num1 = 0, num2 = 0, sum = 0;

    printf("Enter first number: ");
    if (scanf("%lf", &num1) != 1) {
        fprintf(stderr, "Invalid input.\n");
        return 1;
    }

    printf("Enter second number: ");
    if (scanf("%lf", &num2) != 1) {
        fprintf(stderr, "Invalid input.\n");
        return 1;
    }

    sum = num1 + num2;
    printf("Sum: %.2lf\n", sum);

    return 0;
}
```

What the AI “Fixed”:

- Added input validation by checking scanf return values

- Initialized variables to prevent undefined behavior

- Added proper error handling and messaging

- Used void parameter for main() following best practices

What Still Remains Vulnerable:

Despite these improvements, the code is still not safe against hostile input. The checks look like validation, but they leave real gaps. Here’s why the “fix” doesn’t work:

Undefined Behavior on Out-of-Range Input:

The scanf("%lf", &num1) calls remain a weak way to read untrusted input. The return value only reports how many conversions succeeded. It says nothing about whether the value fits in a double, and the C standard leaves the behavior undefined when the converted result cannot be represented in the target object.

Attack Scenario:

```
# Inputs the "secure" version accepts without complaint:

Enter first number: 1e999999
# Out of range for a double: behavior is undefined by the C standard
# (common C libraries typically return infinity, but nothing guarantees it)

Enter first number: nan
# Accepted as a valid number, so the "sum" is NaN

Enter first number: 12abc
# scanf converts 12 and leaves "abc" in the input stream,
# so the second scanf fails on leftovers from the first prompt
```

Why the Validation Doesn’t Help:

- Wrong Question: The return value answers “did a conversion happen?”, not “is this value acceptable?”

- No Range Check: Nothing verifies that the value is finite and within the range the program expects

- No Length Limit: scanf consumes as many characters as match the format, so the program has no control over how much it reads

- Leftover Input: Unconsumed characters stay in the stream and affect later reads

What the AI Validation Actually Provides:

- Graceful handling of non-numeric input like “abc”

- Prevention of uninitialized variable usage

- Better error messages for users

- Stopping program execution on conversion failures

What It Doesn’t Prevent:

- Undefined behavior on out-of-range numeric input

- Non-finite values such as inf and nan being accepted as valid numbers

- Trailing garbage after a number corrupting the next read

- Unbounded input length

This simplified example demonstrates a critical flaw in how AI models approach security. They apply surface-level fixes that address obvious programming errors while missing the underlying weakness. The AI recognizes that input validation is important, but fails to recognize that scanf is a poor tool for untrusted input, regardless of how its return value is handled. The more robust pattern is to read a bounded line with fgets and convert it with strtod, checking for range errors and leftover characters.
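
For comparison, here is a minimal sketch of how the number-reading step could be hardened by reading a bounded line with fgets and converting it with strtod. The function names parse_double and read_double and the 128-byte line limit are assumptions made for this example, not part of any AI output shown above.

```c
#include <errno.h>
#include <math.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* Parse one finite double from a text line.
 * Returns 0 on success, -1 on empty, malformed, out-of-range,
 * or non-finite input. */
int parse_double(const char *line, double *out) {
    char *end = NULL;

    errno = 0;
    double value = strtod(line, &end);

    if (end == line) return -1;        /* nothing was converted */
    if (errno == ERANGE) return -1;    /* overflow or underflow */
    if (!isfinite(value)) return -1;   /* rejects "inf" and "nan" */

    /* Allow trailing whitespace (such as the newline kept by fgets) only. */
    while (*end == ' ' || *end == '\t' || *end == '\n' || *end == '\r') end++;
    if (*end != '\0') return -1;

    *out = value;
    return 0;
}

/* Read one bounded line from `in` and parse it. Over-long lines are rejected. */
int read_double(FILE *in, double *out) {
    char line[128];

    if (fgets(line, sizeof line, in) == NULL) return -1;
    if (strchr(line, '\n') == NULL && !feof(in)) {
        /* The line did not fit: drain the rest so the next read starts clean. */
        int c;
        while ((c = fgetc(in)) != '\n' && c != EOF) { }
        return -1;
    }
    return parse_double(line, out);
}
```

In the adder program, each scanf call would become a call to read_double(stdin, &num1) with the same error branch. Unlike scanf, strtod has defined behavior for out-of-range input: it sets errno to ERANGE, which the caller can check.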

The Scale of the Problem

Research examining real-world AI-generated code reveals alarming statistics from multiple comprehensive studies:

Prevalence: Studies show nearly 30% of AI-generated code snippets contain security weaknesses. Python shows 33% vulnerability rates, JavaScript 25%.

Diversity: These aren’t isolated issues. Research found 544 security problems across 38 different vulnerability types in just 452 code samples.

Consistency: Analysis indicates every major AI model introduces vulnerabilities in at least 50% of generated C code. No model performs acceptably from a security standpoint.

Cross-Platform Impact: The problem spans multiple programming languages and development environments, with GitHub Copilot research showing 40-44% vulnerability rates in security-relevant scenarios.

Why This Matters More Than You Think

The security implications extend far beyond individual programs:

False Security Confidence: Developers often assume AI-generated code follows best practices, leading to reduced scrutiny and security review of potentially dangerous code.

Scale Effect: As AI code generation becomes mainstream, vulnerable patterns spread exponentially across the software ecosystem, creating systemic risks.

Context Sensitivity: Research demonstrates that minor prompt changes dramatically affect security outcomes. Adding or removing a single comment can shift vulnerability rates by 20% or more, making secure code generation unpredictable.

Supply Chain Risks: Vulnerable AI-generated code in libraries and frameworks can affect thousands of downstream applications, multiplying the impact of each security flaw.

What This Means for Software Security

We’re entering an era where insecure code can be generated faster than ever before. Traditional security practices may be insufficient for the AI-driven development explosion. Organizations adopting AI tools without corresponding security measures risk creating vulnerable systems at a massive scale, systematically reintroducing historically devastating vulnerabilities into modern codebases.

Securing Vibe Coding

Rapid development, including vibe coding, doesn’t need new defenses. Moving fast amplifies familiar risks, especially when AI-suggested code lands in production with minimal validation. Everything I suggest here is a proven security practice, with one addition: a review that checks vibe-coded parts against the pitfalls listed earlier. Treat vibe-coded output like untrusted third-party code: verify, constrain, monitor.

Security Foundation

Secret Management:

- Store API keys in encrypted managers like AWS Secrets Manager

- Never commit credentials to repositories

- Exposed secrets lead to account takeovers and data breaches within hours
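
As a small illustration of keeping credentials out of source code, the sketch below reads a secret from an environment variable at runtime. The names copy_secret and load_secret are hypothetical, and the environment variable is only a stand-in for whatever your secret manager injects into the process.

```c
#include <stdlib.h>
#include <string.h>

/* Copy `value` into `out` if it is present, non-empty, and fits.
 * Returns 0 on success, -1 otherwise. */
int copy_secret(const char *value, char *out, size_t out_size) {
    if (value == NULL || value[0] == '\0') return -1;
    size_t len = strlen(value);
    if (len >= out_size) return -1;
    memcpy(out, value, len + 1); /* includes the terminating NUL */
    return 0;
}

/* Load a secret from the environment variable `name`.
 * Fails closed: a missing variable is an error, never a default value. */
int load_secret(const char *name, char *out, size_t out_size) {
    return copy_secret(getenv(name), out, out_size);
}
```

The important design choice is the failure mode: if the variable is missing, the program refuses to start instead of falling back to a built-in default key.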

Database Safety:

- Use ORMs and parameterized queries only

- Set up automated backups because ransomware attacks target databases first

- SQL injection attacks can dump entire databases or delete all your data

Infrastructure Protection:

- Enable TLS everywhere with automatic certificate renewal

- Use TLS 1.2+ with strong cipher suites

- Unencrypted traffic gets intercepted and modified by attackers

Frontend Security

Force HTTPS Connections:

- Redirect all HTTP traffic to HTTPS

- Set HSTS headers

Input Validation and Sanitization:

- Validate and encode all user input on the server; treat client-side checks as a convenience, not a defense

- Use HTML sanitization libraries with allow-lists

- Implement Content Security Policy (CSP) headers

- Cross-site scripting (XSS) attacks inject malicious code that steals user accounts and spreads to other visitors

Client-Side Secret Protection:

- Never store API keys in JavaScript code or browser storage

- These secrets are visible to anyone who views your page source, leading to API abuse and billing fraud

CSRF Prevention:

- Use anti-CSRF tokens and SameSite cookies for state-changing requests

- Implement proper CORS policies with specific origins

- Without protection, attackers can submit forms on behalf of logged-in users, changing passwords or making purchases without consent

Backend Security

Strong Authentication:

- Use proven libraries for login systems and hash passwords with bcrypt

- Enable MFA for admin accounts because passwords alone are routinely phished, reused, or leaked

- Weak authentication leads to account takeovers

Authorization Checks:

- Verify permissions at every sensitive endpoint

- Use role-based access control (RBAC)

- Missing authorization lets attackers access other users’ data or admin functions just by changing URL parameters

API Protection:

- Authenticate all sensitive endpoints and configure CORS with specific origins

- Monitor API usage for abuse patterns

Security Headers:

- Set Content Security Policy to block inline scripts and X-Frame-Options to prevent clickjacking

- Set HttpOnly and Secure cookie flags

- Without headers, attackers inject malicious code or trick users into clicking hidden buttons

System & Infrastructure Security

Configuration Security:

- Never expose config files or debug info to users

- Use secure defaults and disable unnecessary features

- Configuration exposure reveals system internals that help attackers plan attacks

Buffer Overflow Prevention:

- Use memory-safe languages when possible

- Implement bounds checking for all buffer operations

- Buffer overflows can lead to complete system compromise and arbitrary code execution
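
The bounds-checking advice above can be sketched as a bounded copy that replaces strcpy. The helper name copy_bounded is hypothetical. It relies on the standard snprintf behavior of returning the length the full output would have needed.

```c
#include <stdio.h>
#include <string.h>

/* Copy `src` into `dst` (capacity `dst_size`), always NUL-terminating.
 * Returns 0 if the whole string fit, -1 if it was truncated
 * or if dst_size is 0. */
int copy_bounded(char *dst, size_t dst_size, const char *src) {
    if (dst_size == 0) return -1;
    int needed = snprintf(dst, dst_size, "%s", src);
    if (needed < 0 || (size_t)needed >= dst_size) return -1;
    return 0;
}
```

Returning an error on truncation matters: silently cutting off a path or username can create a different bug, so the caller should decide what to do when the input does not fit.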

Race Condition Mitigation:

- Implement proper synchronization mechanisms

- Use database transactions with appropriate isolation levels

- Race conditions can lead to data corruption and privilege escalation attacks
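
For state held in a single process, one way to close the “check, then decrement” race is an atomic compare-and-swap loop, sketched below with C11 atomics. The function name reserve_item is hypothetical. For inventory stored in a database, the equivalent would be a transaction or a conditional update, which this sketch does not cover.

```c
#include <stdatomic.h>

/* Try to reserve one unit of stock. Returns 1 on success, 0 if sold out.
 * The compare-and-swap makes "check and decrement" one atomic step,
 * so two concurrent buyers cannot both take the last item. */
int reserve_item(atomic_int *stock) {
    int current = atomic_load(stock);
    while (current > 0) {
        /* On failure, `current` is refreshed with the latest value
         * and the loop re-checks it. */
        if (atomic_compare_exchange_weak(stock, &current, current - 1)) {
            return 1;
        }
    }
    return 0;
}
```

The vulnerable version reads the stock, tests it, and writes the new value as three separate steps, which lets two threads both pass the test before either writes.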

Path Traversal Protection:

- Validate and sanitize all file paths

- Store uploads outside web root

- Path traversal attacks can expose sensitive system files and configuration data
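
A minimal sketch of the path validation step, assuming uploads are addressed by a plain file name inside one directory. The names is_safe_filename and build_upload_path are hypothetical, as is the /srv/uploads directory mentioned below.

```c
#include <stdio.h>
#include <string.h>

/* Accept only a plain file name: non-empty, no path separators,
 * and not "." or "..". Deliberately strict. */
int is_safe_filename(const char *name) {
    if (name == NULL || name[0] == '\0') return 0;
    if (strcmp(name, ".") == 0 || strcmp(name, "..") == 0) return 0;
    if (strchr(name, '/') != NULL || strchr(name, '\\') != NULL) return 0;
    return 1;
}

/* Build "<base_dir>/<name>" into `out`. Returns 0 on success,
 * -1 if the name is rejected or the result does not fit. */
int build_upload_path(char *out, size_t out_size,
                      const char *base_dir, const char *name) {
    if (!is_safe_filename(name)) return -1;
    int n = snprintf(out, out_size, "%s/%s", base_dir, name);
    if (n < 0 || (size_t)n >= out_size) return -1;
    return 0;
}
```

With a base directory of /srv/uploads, a request for photo.png resolves inside that directory, while ../etc/passwd is rejected before any file is opened. This check does not handle symbolic links; a fuller version would also resolve the final path (for example with POSIX realpath) and confirm it still starts with the base directory.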

Input Validation & Injection Prevention

Command Injection Defense:

- Never pass user input to system commands

- Use safe APIs instead of shell execution

- Command injection can lead to complete server compromise and data theft
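
When an external program really is needed, the sketch below shows the two habits that matter: validate the argument against an allow-list and start the program without a shell. It assumes a POSIX system with a ping binary on the PATH, and the function names is_valid_hostname and ping_once are hypothetical.

```c
#include <ctype.h>
#include <string.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* Allow-list check: letters, digits, dots, and hyphens, 1 to 253 characters,
 * and no leading hyphen (so the value cannot be read as an option). */
int is_valid_hostname(const char *host) {
    size_t len = strlen(host);
    if (len == 0 || len > 253 || host[0] == '-') return 0;
    for (size_t i = 0; i < len; i++) {
        unsigned char c = (unsigned char)host[i];
        if (!isalnum(c) && c != '.' && c != '-') return 0;
    }
    return 1;
}

/* Run `ping -c 1 <host>` without a shell.
 * Returns the exit status of ping, or -1 on error or rejected input. */
int ping_once(const char *host) {
    if (!is_valid_hostname(host)) return -1;

    pid_t pid = fork();
    if (pid < 0) return -1;
    if (pid == 0) {
        /* Arguments go in an array, so shell metacharacters stay literal. */
        char *const argv[] = { "ping", "-c", "1", (char *)host, NULL };
        execvp("ping", argv);
        _exit(127); /* only reached if exec failed */
    }

    int status = 0;
    if (waitpid(pid, &status, 0) < 0) return -1;
    return WIFEXITED(status) ? WEXITSTATUS(status) : -1;
}
```

The typical AI-generated version builds a string such as "ping -c 1 " plus user input and passes it to system(), which hands the whole string to a shell. With an argument array there is no shell to interpret characters like ; or $( ).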

HTML Injection Mitigation:

- Use HTML sanitization libraries with allow-lists

- Use auto-escaping template engines

- HTML injection can lead to account takeover and malware distribution
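
To show what output encoding does under the hood, here is a minimal escaping routine for text placed inside HTML element content. The function name html_escape is hypothetical, and in a real application a maintained template engine or sanitization library is the better choice. This sketch only covers the five characters that are significant in HTML.

```c
#include <stddef.h>
#include <string.h>

/* Escape the five HTML-significant characters of `src` into `dst`.
 * Returns 0 on success, -1 if `dst` (capacity dst_size) is too small. */
int html_escape(char *dst, size_t dst_size, const char *src) {
    size_t used = 0;
    if (dst_size == 0) return -1;

    for (; *src != '\0'; src++) {
        char single[2] = { *src, '\0' };
        const char *rep = single; /* default: copy the character as-is */
        switch (*src) {
            case '&':  rep = "&amp;";  break;
            case '<':  rep = "&lt;";   break;
            case '>':  rep = "&gt;";   break;
            case '"':  rep = "&quot;"; break;
            case '\'': rep = "&#39;";  break;
            default:   break;
        }
        size_t len = strlen(rep);
        if (len >= dst_size - used) { /* keep room for the NUL */
            dst[used] = '\0';
            return -1;
        }
        memcpy(dst + used, rep, len);
        used += len;
    }
    dst[used] = '\0';
    return 0;
}
```

After escaping, a comment containing a script tag is displayed as text instead of being executed by the browser.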

Operations Security

Dependency Management:

- Scan dependencies regularly and keep them updated

- Monitor for typosquatting attacks

- Vulnerable libraries are a common attack vector. Attackers exploit known flaws to gain server access
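
One simple way to watch for typosquatting is to compare each requested package name against the names you actually depend on and flag near misses. The sketch below uses Levenshtein edit distance. The function names, the 64-character limit, and the distance threshold of 2 are assumptions for illustration.

```c
#include <stddef.h>
#include <string.h>

#define MAX_NAME 64

/* Levenshtein edit distance between two package names.
 * Returns -1 if either name is longer than MAX_NAME. */
int edit_distance(const char *a, const char *b) {
    size_t la = strlen(a), lb = strlen(b);
    if (la > MAX_NAME || lb > MAX_NAME) return -1;

    int prev[MAX_NAME + 1], curr[MAX_NAME + 1];
    for (size_t j = 0; j <= lb; j++) prev[j] = (int)j;

    for (size_t i = 1; i <= la; i++) {
        curr[0] = (int)i;
        for (size_t j = 1; j <= lb; j++) {
            int cost = (a[i - 1] == b[j - 1]) ? 0 : 1;
            int best = prev[j - 1] + cost;                       /* substitute */
            if (prev[j] + 1 < best) best = prev[j] + 1;          /* delete */
            if (curr[j - 1] + 1 < best) best = curr[j - 1] + 1;  /* insert */
            curr[j] = best;
        }
        memcpy(prev, curr, (lb + 1) * sizeof prev[0]);
    }
    return prev[lb];
}

/* Returns 1 if `requested` is close to, but not equal to, a trusted name. */
int looks_like_typosquat(const char *requested,
                         const char *const trusted[], size_t n) {
    int near_match = 0;
    for (size_t i = 0; i < n; i++) {
        int d = edit_distance(requested, trusted[i]);
        if (d == 0) return 0;                /* exact match: trusted package */
        if (d > 0 && d <= 2) near_match = 1;
    }
    return near_match;
}
```

With “bittensor” in the trusted list, both “bitensor” and “qbittensor” are one edit away and get flagged. A check like this is a heuristic that complements a dependency scanner and lockfiles; it does not replace them.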

Error Handling:

- Log errors securely but never show stack traces to users

- Use generic error messages

- Error messages reveal system internals that help attackers find vulnerabilities and plan attacks

Session Security:

- Use HttpOnly and Secure cookie flags. Rotate session IDs after login

- Implement appropriate session timeouts

- Weak session management lets attackers hijack user accounts by stealing cookies

File Upload Safety:

- Validate file types and sizes strictly. Store uploads outside the web root directory

- Use allow-lists for permitted file types

- Malicious file uploads can install backdoors, serve malware, or crash your servers
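
File extensions and client-supplied content types are easy to fake, so an allow-list is more reliable when it looks at the file content itself. The sketch below accepts only PNG and JPEG data by checking their leading signature bytes. The function names and the 5 MiB size limit are hypothetical.

```c
#include <stddef.h>
#include <string.h>

#define MAX_UPLOAD_BYTES (5u * 1024u * 1024u) /* hypothetical 5 MiB limit */

/* Returns 1 if the data starts with the PNG or JPEG signature, else 0. */
int has_allowed_image_signature(const unsigned char *data, size_t len) {
    static const unsigned char png[] =
        { 0x89, 'P', 'N', 'G', 0x0D, 0x0A, 0x1A, 0x0A };
    static const unsigned char jpeg[] = { 0xFF, 0xD8, 0xFF };

    if (len >= sizeof png && memcmp(data, png, sizeof png) == 0) return 1;
    if (len >= sizeof jpeg && memcmp(data, jpeg, sizeof jpeg) == 0) return 1;
    return 0;
}

/* Combined check: size limit first, then the content signature. */
int is_acceptable_upload(const unsigned char *data, size_t len) {
    if (len == 0 || len > MAX_UPLOAD_BYTES) return 0;
    return has_allowed_image_signature(data, len);
}
```

A signature check confirms how a file begins, not that the whole file is a valid image, so it works best together with storing uploads outside the web root.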

Rate Limiting:

- Add limits to login attempts and sensitive operations

- Use progressive delays for failed attempts and implement CAPTCHA for suspicious activity

- Without throttling, attackers brute force passwords, abuse APIs for profit, or crash servers with request floods
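
The throttling idea can be sketched as a fixed-window counter kept per client, for example per account or IP address. The type and function names below are hypothetical, and the caller passes in the current time so the logic is easy to test. Production systems usually keep these counters in a shared store so that all servers see the same state.

```c
#include <time.h>

typedef struct {
    time_t window_start;   /* start of the current window */
    int    count;          /* attempts seen in the current window */
    int    max_attempts;   /* allowed attempts per window */
    int    window_seconds; /* window length in seconds */
} rate_limiter;

void limiter_init(rate_limiter *rl, int max_attempts, int window_seconds) {
    rl->window_start = 0;
    rl->count = 0;
    rl->max_attempts = max_attempts;
    rl->window_seconds = window_seconds;
}

/* Record one attempt at time `now`. Returns 1 if allowed, 0 if throttled. */
int limiter_allow(rate_limiter *rl, time_t now) {
    if (now - rl->window_start >= rl->window_seconds) {
        rl->window_start = now; /* a new window begins with this attempt */
        rl->count = 0;
    }
    if (rl->count >= rl->max_attempts) return 0;
    rl->count++;
    return 1;
}
```

With a limit of three attempts per 60 seconds, a fourth login attempt inside the window is refused, and attempts are accepted again once the window has elapsed.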

Remember: Security is not a one-time implementation but an ongoing process. Regularly review and update these practices as new threats emerge and your application evolves.

Conclusion

AI coding tools are incredibly helpful and can speed up development, but they come with hidden risks. These tools often introduce security vulnerabilities that seem harmless at first but can lead to serious problems later.

Just because your code works doesn’t mean it’s safe from attacks. The security issues we covered above are common in AI-generated code, so it’s important to review everything carefully. Before deploying any AI-assisted code, check it against the security checklist above. A little extra time now can prevent major headaches down the road.
